https://dehe25.itch.io/ai-gamemaster-se2/purchase

AI GameMaster SE2 is still in alpha. It is not feature-complete yet, and bugs may occur. See “ToDo” for more information about the planned final product.

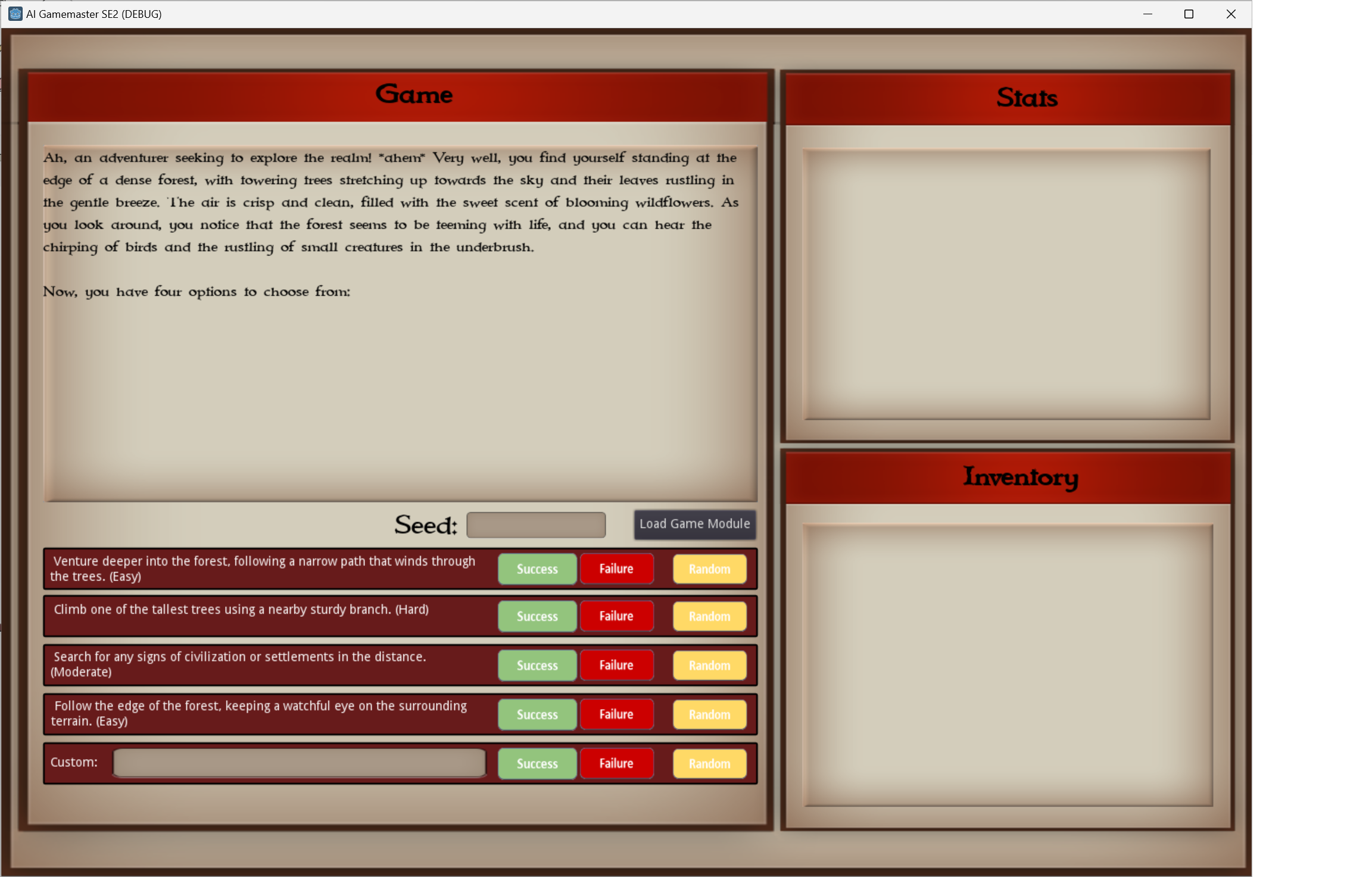

AI GameMaster is an AI-driven oracle for solo pen & paper RPG sessions.

It can query any AI LLM API to create the story flow.
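As an illustration of what such a query can look like, here is a minimal Python sketch that builds (but does not send) a chat request for a locally running ollama server. It assumes ollama's default address http://localhost:11434 and its /api/chat endpoint. The model tag, the prompts, and the helper name build_chat_request are hypothetical and are not taken from AI GameMaster itself, whose internal request format is not described here.

```python
import json
import urllib.request

# Assumption: ollama's default local address; adjust if your server differs.
OLLAMA_URL = "http://localhost:11434"


def build_chat_request(model, messages, base_url=OLLAMA_URL):
    """Build a POST request for ollama's /api/chat endpoint (not sent here)."""
    payload = {
        "model": model,        # e.g. a llama2 tag you have pulled (assumption)
        "messages": messages,  # list of {"role": ..., "content": ...} dicts
        "stream": False,       # ask for one complete answer instead of chunks
    }
    return urllib.request.Request(
        url=f"{base_url}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical prompts; the real game modules define their own.
request = build_chat_request(
    "llama2",
    [
        {"role": "system", "content": "You are the game master of a solo RPG."},
        {"role": "user", "content": "I do open the tavern door."},
    ],
)
# To actually send it (requires a running ollama server):
# with urllib.request.urlopen(request) as response:
#     reply = json.load(response)["message"]["content"]
```

A hosted web API would need a different URL, an API key header, and possibly a different payload shape, so treat this only as a picture of the general idea.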

Requirements:

-ollama for a self-hosted AI

-.NET 8.0 for the game module and the API endpoint generator

(Both can be found in the .\Requirements folder.)

For a locally hosted API using ollama, a mid-tier graphics card such as an RTX 3060 with at least 8 GB of video RAM is required.

Project Description:

Currently, game modules for ollama (using llama2-7B) and for Anthropic Claude are included. You can find the modules in the .\Presets folder.

API endpoints and settings are saved in .apisettings.json files, and game modules (which do include an API endpoint) are saved in .gamedata.json files.
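The two file types can be told apart by their suffix alone. A minimal Python sketch, assuming nothing about the JSON content inside the files (which is not described here); the function name settings_kind is hypothetical:

```python
from pathlib import PureWindowsPath


def settings_kind(path):
    """Return "gamedata", "apisettings", or None based on the file suffix."""
    # PureWindowsPath handles backslash-separated paths on any platform.
    name = PureWindowsPath(path).name.lower()
    if name.endswith(".gamedata.json"):
        return "gamedata"      # game module (includes an API endpoint)
    if name.endswith(".apisettings.json"):
        return "apisettings"   # API endpoint and settings only
    return None


print(settings_kind(r".\Presets\ollama.gamedata.json"))  # gamedata
```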

Programs for editing the API and game data settings can be found in the “.\Game Editor” folder.

Upon start, the default API endpoint for ollama will be loaded. Most likely, you won’t have the required model pulled. To pull it, hit “Load Game Module”.

Click on the “…” button and select the file .\Presets\ollama.gamedata.json.

Afterwards, click on “Pull Model”. Pulling the model may take several minutes.

When using a Web API, you will most likely need an API key.

For example, when using the Anthropic Claude API, you would open .\Presets\claude.gamedata.json from the “Load Game Module” menu.

Then you would enter your API key in the corresponding field, and click “OK”.

When using a web API, “Pull Model” is not required.

Please note that API usage can drain your budget very quickly. A mid-length session can cost $5 or more when using Anthropic Claude. These costs will be reduced once history limiting has been implemented.
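To see why history limiting should help, consider a rough model in which every request resends the conversation so far, as chat-style APIs typically require. This is an assumption about how the tool talks to the API, not a documented detail, and the function name and the 40-turn session length are made up for illustration. The sketch counts how many history entries are sent in total, with and without the planned limit of 5 entries:

```python
def entries_sent(turns, history_limit=None):
    """Total history entries sent over a session of `turns` requests."""
    total = 0
    for turn in range(1, turns + 1):
        # Without a limit, request number `turn` carries `turn` entries.
        size = turn if history_limit is None else min(turn, history_limit)
        total += size
    return total


print(entries_sent(40))                   # 820
print(entries_sent(40, history_limit=5))  # 190
```

Under this model the unlimited session grows quadratically with the number of turns, while the limited one grows linearly. Actual costs depend on token counts and the provider's pricing, which this sketch does not model.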

Usage:

After a short loading period, you will see the current state of the game in the large window at the upper left.

Four default choices that correspond to the current scene will be shown.

Now roll your dice in real life according to the displayed difficulty and the requirements set by your ruleset.

Then work out whether the roll is a win or a loss, and hit the corresponding “Success” or “Failure” button.

You may also leave the result to the AI by clicking the corresponding “Random” button.

The fifth option is to enter your own command. Start it with “I do ” and describe your desired action. Afterwards, click on “Success”, “Failure” or “Random” in the fifth row.

(In the alpha release, all choices for custom commands will lead to “Random”.)

ToDo:

-Add rolling of enemy stats on fights

-Add “Success” and “Failure” for custom commands

-Add stats (int, str, sta etc.)

-Add inventory and loot

-Add support for custom seeds.

-Add variable support for game modules

-Add streaming support. This will display the AI's answer progressively as it arrives, instead of waiting for the whole answer to load.

-Add optional text completion support. The current chat-based approach leads to an encounter-based chain of events. With Claude this works quite well, but with ollama it is rather boring.

-Limit the history size to 5 entries to reduce API usage costs

Changelog:

Alpha 1.0.1 - Fixed a bug where settings in “Load Game Module” were not saved

Alpha 1.1.0 - Implemented Lua scripting

-Fixed Game Data / API Generators

-Fixed default.gamedata.json (it contained the wrong API endpoint)

AI GameMaster SE2

2024-07-06